What Should I Build First for visionOS?

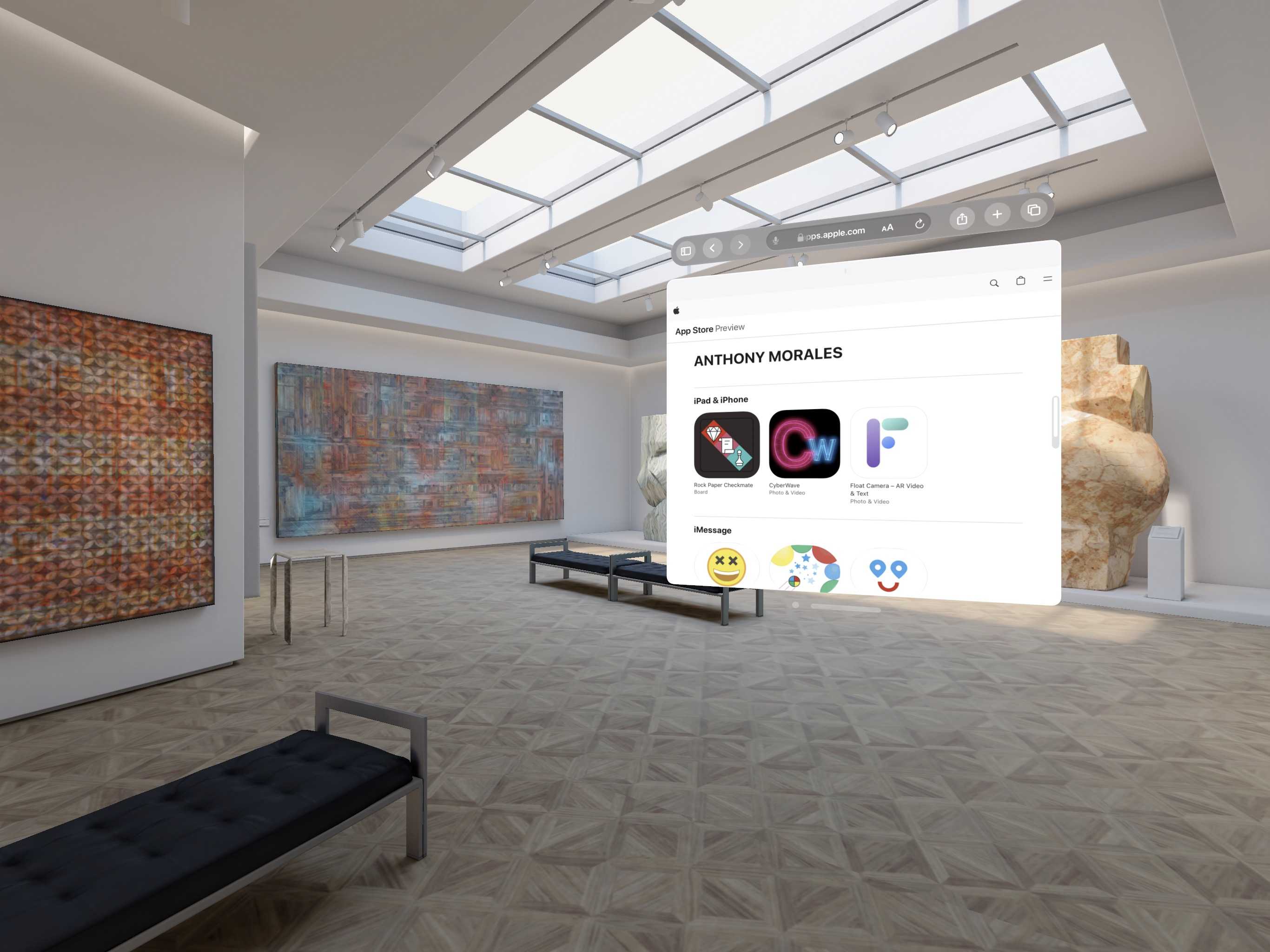

My App Store page in the Vision Pro Simulator

I want to start building for visionOS immediately...but what should I build?

I, like most indie devs, have a litany of harebrained ideas I want to develop. But I can't recall a time when I tried to build something for a platform I've never actually used. Even when I momentarily flirted with the idea of becoming a VR developer, I had an Oculus DK2 to play around with – and I didn't know how to write a single line of code in any language at that time.

Right now there are a mere handful of people worldwide who have gotten their hands on the Vision Pro. I am not one of them. I don't know how it feels to use eye tracking to interact with software; I also don't know how it feels to have my hands simultaneously at my sides and able to control software via subtle gestures. When I watched the iPhone announcement in 2007 I knew I wanted to try this new device. However, it took years of using iOS, and of watching developers explore its capabilities, before I better understood what an iPhone could enable.

But I do know a couple of things related to visionOS right now.

I know I want to focus on spatial computing concepts and software. I'm less interested in floating app windows in visionOS; I'm more interested in volumes and immersive spaces. I view apps in floating windows, untethered to anything in reality, as the less interesting stepping stones towards an augmented reality future. Apps in untethered floating windows may be the primary use case for many people (including myself) in the short term, but any day of the week I'd prefer to work with software that's aware of a person's surroundings. That's what spatial computing is to me, and that's what I'm interested in. In the context of visionOS, that means I'll be spending plenty of time with a new version of my old friend ARKit, since ARKit is required for visionOS scene reconstruction and hand tracking.

I also know I'm not good at idly waiting. I have zero intention of waiting several more months to get my hands on a Vision Pro...to only then commence meditating on which parts of the visionOS SDK and ecosystem to focus on. I want to get started now, so I see two primary paths forward:

- Update some of my old ARKit-based iOS apps for visionOS

- Pick a brand new concept and build something from scratch for visionOS

I'll do both, but I'll start with the former because one or two of the concepts behind my old iOS AR apps are dead simple. I think straightforward concepts are where I should concentrate for these first projects.

I expect to experiment with and build many different types of visionOS apps. Some broad categories of apps may not take off until the second, third, or seventh generation of visionOS hardware is available. Or perhaps it will take a more mainstream (less Pro) version of a headset to instigate an explosion of usage for a certain app category. Or perhaps entire product categories will be enabled, or created, by future releases of visionOS – versions that aren't as locked down and restrictive as the initial v1.0.

Regardless, I'll be focusing on this platform for the foreseeable future so I don't need to be precious about what I build first. Off the top of my head, a few broad categories that excite me are:

- Personalization: personalize a space in AR and "make it your own" via filters, objects, colors, messages, themes, or something else (similar to customizing a homescreen or desktop)

- Purposeful Visualization: change your home decor and style in real-time, view yet-to-be-built stages of a construction site in-situ, or virtually try on clothing

- Experiential Visualization: meditation animations, music-reactive visuals, or "pretty 3D lights"

- Games: multiplayer games that facilitate feelings of presence via Spatial Personas

- Utilities, widgets, and "augments": iOS and macOS recently put widgets front and center, and I believe visionOS will follow suit (and that some early visionOS App Store hits will be widgets)

- Accessibility and inclusivity: visionOS brings some previously esoteric technical capabilities to a much wider audience; eye tracking and built-in hand tracking may lead to new types of American Sign Language software and other products that foster inclusivity

Luckily, I've built three iOS apps that fall into three different buckets from the list above.

1. Rock Paper Checkmate is an auto-chess game that uses a custom Core ML model I trained using Create ML – I'll update this eventually, but it's on the backburner because without a Vision Pro in-hand I have too many unanswerable questions about custom hand gestures, multiplayer, etc.

2. CyberWave revolves around visualizing music in augmented reality, a concept I LOVE...however, the entire notion may be against Apple's Human Interface Guidelines for visionOS Motion, so this may not be the best place to start

3. Float is about personalizing augmented reality using something everyone has: pictures from their phone

Float is where it all started for me on my AR journey, so it's fitting my visionOS journey starts with Float.

Let's see what it takes to translate a 2017 concept built for ARKit 1 into a visionOS app ready for launch in 2024!